Author

Himanshu Srivastava

Most founders treat an AI MVP the same way they would treat a standard software MVP: define some features, assign a developer, ship in a few weeks. This is one of the most expensive misconceptions in early-stage product development.

A traditional software MVP can afford to be built incrementally. You add a feature, test it, move on. The product's core behaviour is deterministic — it does exactly what the code says, every time.

An AI MVP is fundamentally different. Here is why:

The intelligence layer must be part of the architecture from day one. You cannot build a standard app and bolt AI on later. The data pipeline, the model inference endpoint, the feedback loop — these need to be designed into the foundation, not retrofitted.

Your product's quality depends on data, not just code. A beautifully written codebase with poor training data produces a broken AI product. Data readiness is the first thing you must audit.

Post-launch maintenance is more complex. Traditional software breaks predictably. AI products experience model drift — their quality degrades over time as real-world data shifts away from training data. You need monitoring from day one.

The failure mode is different. A buggy traditional MVP crashes. A buggy AI MVP confidently gives wrong answers — and users stop trusting it fast.

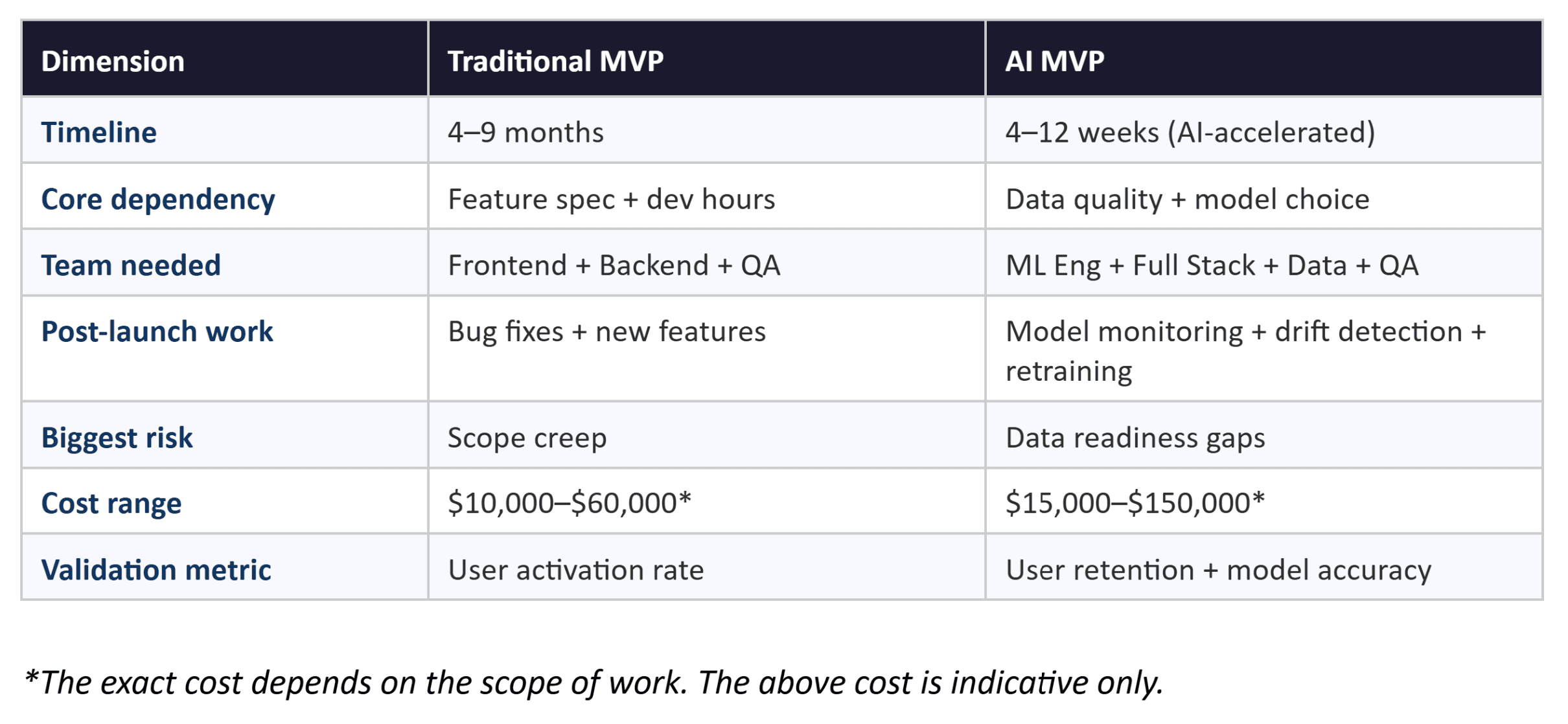

Here is a side-by-side comparison:

Key Takeaway

The single most important question before building an AI MVP is not 'what features do we need?' but 'do we have the data to make this work?' Industry analyses suggest that teams with proper data readiness shorten their build timelines by 30–40%.

2. The 30-Day AI MVP Sprint: Week-by-Week Breakdown

This is the sprint structure we use at Neura Dynamics for Tier 1 and Tier 2 AI MVPs — the projects where 30 days is genuinely achievable. For Tier 3 complexity (multi-agent systems, custom fine-tuning), budget 8 to 12 weeks.

Each week has one non-negotiable deliverable. If you reach the end of a week without that deliverable, the 30-day timeline is already at risk.

Week 1 — Discovery and AI Scoping (Days 1–7)

This is the most underestimated week. Founders want to start coding on Day 1. The teams that actually ship in 30 days spend Days 1 to 7 doing something different.

The goal of Week 1 is to define the one core AI hypothesis your MVP will test. Not a feature list. One hypothesis. Something like: 'If we give a healthcare intake form to an LLM with patient history context, it will triage cases with 85% accuracy — saving nurses 4 hours per day.'

Activities during Week 1:

Problem definition session — define the user pain point in one sentence

AI use case validation — is this genuinely an AI problem, or can a simpler rule-based system solve it?

Data readiness audit — what data do you have, how clean is it, what is missing?

Model selection discussion — which LLM fits this task, what are the context window requirements?

Infrastructure decision — cloud provider, deployment environment, compliance requirements

Scope lock — agree on what is IN the MVP and what is OUT, and do not revisit this during the build

Week 1 Deliverable

Non-negotiable Week 1 deliverable: A signed-off Product Requirements Document (PRD) that includes the core AI hypothesis, data audit findings, chosen model, and a list of exactly what the MVP will and will not do.

Week 2 — Architecture and Prototype (Days 8–14)

With the PRD locked, Week 2 is about making the core AI interaction tangible before writing production code.

Activities during Week 2:

Backend scaffolding — set up project structure, environment variables, authentication foundation

Model integration — connect the chosen LLM via API, write the initial system prompt, test basic responses

RAG pipeline setup (if applicable) — index your documents into a vector store, test retrieval accuracy

UI prototype in Figma — build a clickable prototype of the core user flow, not a polished design

Prompt engineering iteration — test 10 to 20 prompt variations against your core hypothesis

Data pipeline — connect real data sources, validate that the model receives the correct context
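To make the prompt iteration step concrete, here is a minimal sketch of a harness that scores prompt variants against a small labelled test set and compares each one with the accuracy target from the hypothesis. The test cases, the prompt templates, and `fake_model` are all hypothetical stand-ins so the example runs offline; in a real sprint `call_model` would wrap your chosen LLM API.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical labelled cases: (intake note, expected triage label).
TEST_CASES: List[Tuple[str, str]] = [
    ("chest pain and shortness of breath", "urgent"),
    ("routine prescription refill", "routine"),
    ("mild seasonal allergy symptoms", "routine"),
    ("sudden loss of vision in one eye", "urgent"),
]

# Hypothetical prompt variants; {case} is filled in per test case.
PROMPT_VARIANTS: Dict[str, str] = {
    "v1_plain": "Classify this intake note as urgent or routine: {case}",
    "v2_role": "You are a triage nurse. Answer 'urgent' or 'routine' only. Note: {case}",
}


def fake_model(prompt: str) -> str:
    """Offline stand-in for an LLM call (assumption, not a real API)."""
    text = prompt.lower()
    keywords = ["chest pain"]
    # Pretend the role-based prompt helps the model catch a second pattern.
    if "triage nurse" in text:
        keywords.append("loss of vision")
    return "urgent" if any(k in text for k in keywords) else "routine"


def score_variants(
    variants: Dict[str, str],
    cases: List[Tuple[str, str]],
    call_model: Callable[[str], str],
    target: float = 0.85,
) -> Dict[str, Dict[str, object]]:
    """Return accuracy per variant and whether it meets the target."""
    results: Dict[str, Dict[str, object]] = {}
    for name, template in variants.items():
        correct = sum(
            call_model(template.format(case=text)).strip().lower() == expected
            for text, expected in cases
        )
        accuracy = correct / len(cases)
        results[name] = {"accuracy": accuracy, "meets_target": accuracy >= target}
    return results


if __name__ == "__main__":
    for name, result in score_variants(PROMPT_VARIANTS, TEST_CASES, fake_model).items():
        print(name, result)
```

Four cases are far too few to trust a real accuracy figure. The point is the shape: a fixed test set, one score per variant, and a pass or fail against the number written into the PRD.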

Week 2 Deliverable

Non-negotiable Week 2 deliverable: A working prototype where the AI component is connected to real data and produces real outputs — not mocked responses. This is the moment you find out if your hypothesis holds.

Week 3 — Core Build and AI Integration (Days 15–21)

This is the heaviest engineering week. The prototype is validated, the scope is locked, and now you build the production-grade version of everything.

Activities during Week 3:

Full frontend build — implement all user-facing screens based on the validated Figma prototype

Backend API completion — all endpoints, database schema, authentication flows

AI inference layer — production-grade prompt management, streaming responses, error handling

Logging and telemetry — instrument every AI interaction from day one: what was the prompt, what was the response, did the user accept or reject it?

Response caching — cache frequent LLM responses using Redis to reduce API costs by 40 to 60%

Testing foundation — unit tests for core business logic, integration tests for AI endpoints
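The response caching item above can be sketched as follows. This is an illustration only: it uses an in-memory dictionary as a stand-in for Redis so it runs without extra dependencies, and the class name, key scheme, and TTL are assumptions rather than a prescribed design. Actual savings depend entirely on how often users ask equivalent questions.

```python
import hashlib
import time
from typing import Callable, Dict, Tuple


class ResponseCache:
    """In-memory stand-in for a Redis cache, keyed by a hash of model + prompt."""

    def __init__(self, ttl_seconds: float = 3600.0) -> None:
        self.ttl_seconds = ttl_seconds
        self._store: Dict[str, Tuple[float, str]] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def make_key(model: str, prompt: str) -> str:
        # Normalise case and whitespace so trivially different prompts share a key.
        normalised = " ".join(prompt.lower().split())
        return hashlib.sha256(f"{model}:{normalised}".encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, prompt: str, call_model: Callable[[str], str]) -> str:
        """Return a cached response if it is still fresh, else call the model."""
        key = self.make_key(model, prompt)
        now = time.monotonic()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl_seconds:
            self.hits += 1
            return entry[1]
        self.misses += 1
        response = call_model(prompt)
        self._store[key] = (now, response)
        return response


if __name__ == "__main__":
    cache = ResponseCache(ttl_seconds=600)
    slow_model = lambda prompt: f"answer to: {prompt}"  # hypothetical LLM call
    cache.get_or_call("some-model", "What is RAG?", slow_model)
    cache.get_or_call("some-model", "  what is   rag? ", slow_model)
    print(cache.hits, cache.misses)
```

Exact-match caching like this only helps with repeated prompts. Responses that depend on per-user context should include that context in the key, or skip the cache.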

A note on AI coding tools: at Neura Dynamics, our engineers use GitHub Copilot and Cursor throughout the build phase. For boilerplate code — authentication flows, CRUD operations, API endpoint scaffolding — these tools reduce coding time by 40 to 60%. The time saved gets reinvested into prompt engineering and AI quality testing, which are still fully human tasks.

Week 3 Deliverable

Non-negotiable Week 3 deliverable: A functional MVP running in a staging environment with real data flowing through the AI layer, basic telemetry active, and the core user journey completable end-to-end.

Week 4 — Testing, Guardrails, and Launch (Days 22–30)

Week 4 is where AI MVPs either earn user trust or destroy it. Rushing this week is the most common reason AI products get abandoned within 60 days of launch.

Activities during Week 4:

Hallucination testing — systematically test edge cases where the model might confidently produce wrong outputs. Document your acceptable hallucination rate threshold.

Guardrails implementation — add output validation, content filtering, and fallback responses for when the model fails

Model drift monitoring setup — configure LangSmith or Arize AI to track inference quality against benchmarks

Beta user onboarding — get 10 to 20 real users into the product, observe where they get confused or where the AI fails them

Performance optimisation — check response latency, set up CDN caching for static assets, validate API rate limit handling

Production deployment — final deployment to production environment, DNS configuration, SSL, monitoring dashboards live
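As a rough illustration of the guardrails item above, the sketch below wraps a model call with three checks: refuse when there are no sources to ground the answer, fall back when the call fails, and filter output that is empty, too long, or contains a banned phrase. The function name, the fallback message, the banned phrase, and the length limit are all hypothetical; real guardrails would be tuned to your domain.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

FALLBACK_MESSAGE = "I am not confident in an answer here, so I am passing this to a human."


@dataclass
class GuardedResponse:
    text: str
    used_fallback: bool
    reason: str


def guarded_answer(
    question: str,
    sources: List[str],
    call_model: Callable[[str, List[str]], str],
    banned_phrases: Sequence[str] = ("guaranteed cure",),
    max_chars: int = 2000,
) -> GuardedResponse:
    """Call the model and validate its output, falling back on any failure."""
    # Without retrieved sources the answer cannot be grounded, so do not ask.
    if not sources:
        return GuardedResponse(FALLBACK_MESSAGE, True, "no_sources")
    try:
        raw = call_model(question, sources)
    except Exception:
        # Timeouts, rate limits, and provider errors all get the same fallback.
        return GuardedResponse(FALLBACK_MESSAGE, True, "model_error")
    text = raw.strip()
    if not text or len(text) > max_chars:
        return GuardedResponse(FALLBACK_MESSAGE, True, "invalid_length")
    lowered = text.lower()
    if any(phrase in lowered for phrase in banned_phrases):
        return GuardedResponse(FALLBACK_MESSAGE, True, "content_filter")
    return GuardedResponse(text, False, "ok")


if __name__ == "__main__":
    stub = lambda question, sources: "Based on the course notes, the answer is 42."
    print(guarded_answer("What is the answer?", ["course notes"], stub))
```

Logging the `reason` field alongside each interaction shows which guardrail fires most often, which is useful input for the hallucination testing described above.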

Week 4 Deliverable

Non-negotiable Week 4 deliverable: A live MVP with real users, a monitoring dashboard tracking AI quality metrics, and a documented pivot trigger — the specific metric threshold at which you will revisit your core hypothesis.

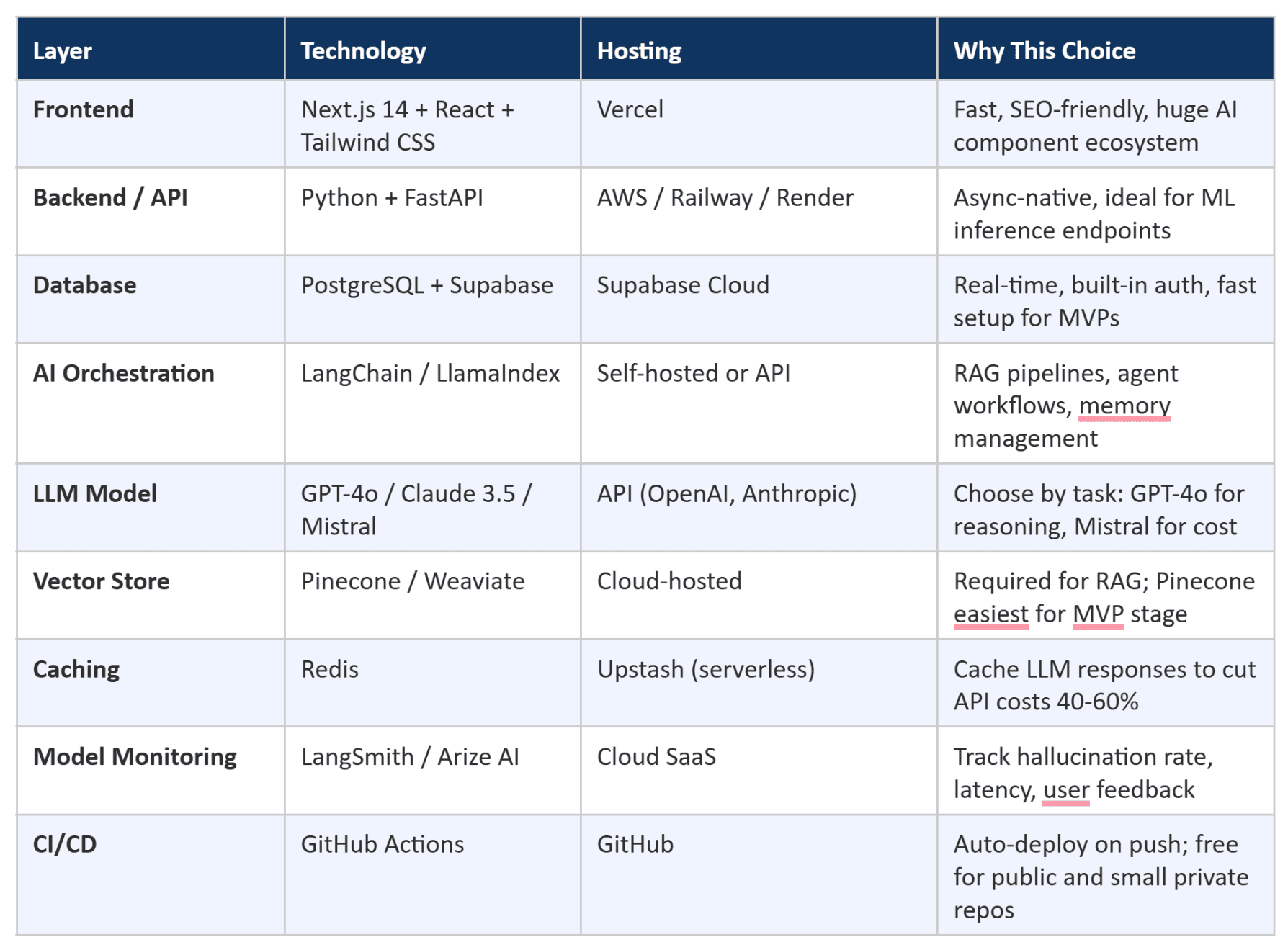

3. The Recommended AI MVP Tech Stack in 2026

Stack decisions at the MVP stage are high-stakes — not because the wrong choice breaks the product immediately, but because the wrong choice creates technical debt that makes scaling painful and expensive six months later.

The stack below is what we at Neura Dynamics default to for AI MVPs. Each choice is optimised for: fast initial setup, AI-native architecture, reasonable cost at low traffic, and a clear path to scaling.

Frontend Layer: Next.js 14 + React + Tailwind CSS

Next.js gives you server-side rendering out of the box, which matters for SEO if your product has public-facing pages. It also has native support for streaming API responses, which is critical when you want to show LLM output token by token rather than waiting for the full response. Deploying on Vercel keeps DevOps overhead close to zero at the MVP stage.

Backend and API Layer: Python + FastAPI

Python is the lingua franca of AI/ML engineering. FastAPI supports asynchronous request handlers natively, which is essential when you are making concurrent calls to LLM APIs and database queries. For MVPs that do not require heavy ML work, Node.js with Express is a valid alternative, but as soon as you need to run inference pipelines or data processing, Python wins.

AI Orchestration: LangChain or LlamaIndex

These frameworks handle the plumbing between your application and the underlying model: conversation history management, RAG pipeline coordination, tool calling, and agent orchestration. LangChain has a larger ecosystem and more tutorials. LlamaIndex is often faster to set up for pure RAG use cases. Do not try to build this plumbing yourself at the MVP stage — the frameworks have solved problems you have not encountered yet.

When to Choose RAG vs Fine-Tuning

RAG (Retrieval-Augmented Generation) — use this when your AI needs to answer questions based on specific documents, databases, or proprietary knowledge. Faster to set up, cheaper, and easier to update when information changes. This is the right choice for 80% of AI MVPs.

Fine-tuning — use this when you need the model to consistently adopt a specific tone, format, or domain-specific language that RAG alone cannot achieve. Fine-tuning is slower, more expensive, and requires labelled training data. Rarely necessary at the MVP stage.

Model Monitoring: Non-Negotiable from Day One

This is the step most MVP teams skip because it feels like a 'later' problem. It is not. Without model monitoring, you will not know when your AI starts degrading — and it will degrade as real-world usage patterns diverge from your test scenarios. LangSmith integrates directly with LangChain. Arize AI works with any model. Set up at least basic logging (prompt in, response out, user feedback) before your first real user touches the product.
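The basic logging described above (prompt in, response out, user feedback) can be as simple as one JSON line per interaction. The sketch below is a minimal version using only the standard library; the field names and the `accepted` / `rejected` feedback values are assumptions, and it is not the LangSmith or Arize AI API.

```python
import io
import json
import time
import uuid
from typing import Iterable, Optional, TextIO


def log_interaction(
    stream: TextIO, prompt: str, response: str, feedback: Optional[str] = None
) -> dict:
    """Append one interaction as a JSON line and return the record."""
    record = {
        "id": str(uuid.uuid4()),
        "ts": time.time(),
        "prompt": prompt,
        "response": response,
        "feedback": feedback,  # "accepted", "rejected", or None if not rated
    }
    stream.write(json.dumps(record) + "\n")
    return record


def acceptance_rate(lines: Iterable[str]) -> Optional[float]:
    """Share of rated interactions that were accepted, or None if none were rated."""
    feedback = [json.loads(line)["feedback"] for line in lines if line.strip()]
    rated = [f for f in feedback if f in ("accepted", "rejected")]
    if not rated:
        return None
    return rated.count("accepted") / len(rated)


if __name__ == "__main__":
    log = io.StringIO()  # stands in for a log file or log shipper
    log_interaction(log, "Explain osmosis", "Osmosis is...", "accepted")
    log_interaction(log, "Explain entropy", "Entropy is...", "rejected")
    log_interaction(log, "Explain inertia", "Inertia is...")
    print(acceptance_rate(log.getvalue().splitlines()))
```

Even this much gives you a baseline acceptance rate to compare against once real users arrive, and the same records can later be exported to a dedicated monitoring tool.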

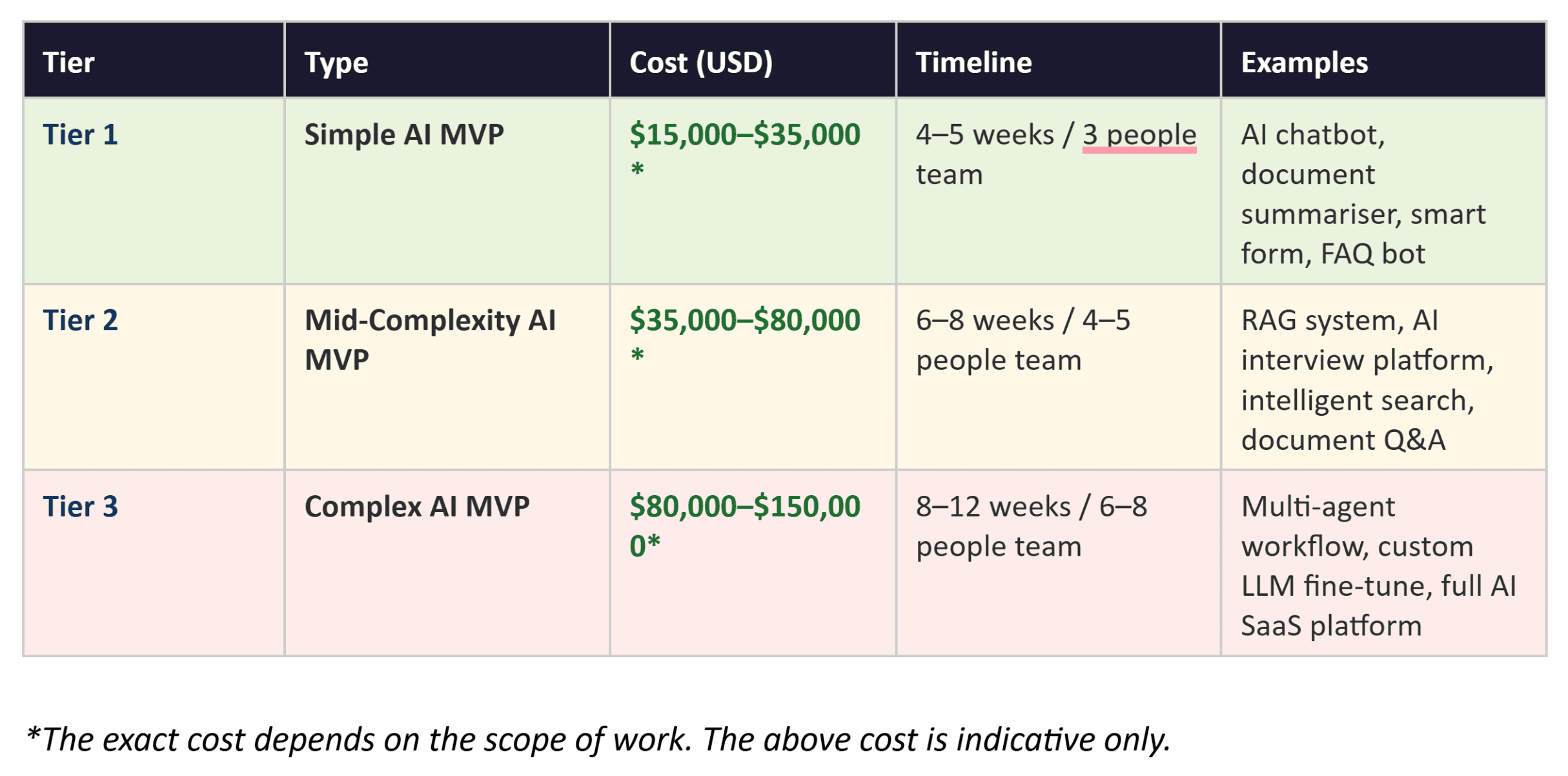

4. AI MVP Cost Breakdown: What You Actually Pay in 2026

Cost transparency is rare in the AI consulting industry. Most agencies give vague ranges or exclude critical cost categories until the project is already underway. This section gives you real numbers based on our delivery experience and 2026 market rates.

One critical upfront note: AI features add 15 to 30% to a development budget compared to traditional software MVPs of the same scope. This premium comes from data preparation, model evaluation, guardrail engineering, and monitoring infrastructure — costs that standard software does not have.

How to Read These Tiers

These tiers assume a team based in India (like Neura Dynamics) working with well-prepared requirements. US or UK-based agencies typically charge 2.5 to 4x these rates for equivalent scope. Eastern European agencies run roughly 1.5 to 2x. At the MVP stage, the difference in quality rarely justifies that price gap.

The Hidden Costs Teams Always Underestimate

Across the dozens of AI MVP post-mortems we have reviewed, these are the cost categories that consistently cause budget overruns:

Data preparation (20–30% of total budget): Cleaning, labelling, and structuring your data for AI consumption almost always takes longer and costs more than planned. If you are starting with messy CRM data, PDFs in different formats, or unstructured text, budget for this explicitly.

LLM API costs at scale: GPT-4o costs approximately $2.50 per million input tokens and $10.00 per million output tokens. For a product with 500 daily active users making 20 queries each, this adds up quickly. Model your expected API costs before you choose your production model.

Model monitoring and post-launch iteration: Plan for 20 to 30% of your initial build cost as a post-launch budget for the first 90 days. This covers monitoring costs, prompt refinements, edge case fixes, and the first retraining cycle if needed.

Compliance and security (for regulated industries): HIPAA compliance for healthtech or SOC 2 for enterprise SaaS adds 30 to 100% to your timeline and budget. This is non-negotiable and cannot be retrofitted cheaply.
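To put a number on the LLM API cost item above, here is a small worked estimate using the quoted GPT-4o prices and the 500 users at 20 queries each. The token counts per query (1,500 input tokens to cover the system prompt plus retrieved context, 300 output tokens) are assumptions chosen for illustration; substitute figures measured from your own prototype.

```python
# Prices quoted above, in USD per million tokens.
INPUT_PRICE_PER_M = 2.50
OUTPUT_PRICE_PER_M = 10.00


def monthly_api_cost(
    daily_users: int,
    queries_per_user: int,
    input_tokens_per_query: int,
    output_tokens_per_query: int,
    days: int = 30,
) -> float:
    """Estimate LLM API spend in USD over `days` days."""
    queries = daily_users * queries_per_user * days
    input_cost = queries * input_tokens_per_query / 1_000_000 * INPUT_PRICE_PER_M
    output_cost = queries * output_tokens_per_query / 1_000_000 * OUTPUT_PRICE_PER_M
    return round(input_cost + output_cost, 2)


if __name__ == "__main__":
    # 500 daily active users x 20 queries, with assumed token counts per query.
    cost = monthly_api_cost(500, 20, input_tokens_per_query=1500, output_tokens_per_query=300)
    print(f"Estimated monthly API cost: ${cost:,.2f}")  # prints $2,025.00
```

Under these assumptions the bill is about $2,025 per month, or $67.50 per day. Doubling the retrieved context roughly doubles the input share, which is why chunk size and retrieval depth are cost decisions as well as quality decisions.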

Budget Planning Rule

The 30% rule: plan for your post-launch iteration budget to be at least 30% of your initial build cost. Teams that do not budget for this run out of runway before their product reaches product-market fit.

5. Real Example: How We Built an AI Teacher Bot MVP in Under 30 Days

Abstract frameworks are useful. Real project stories are better.

One of our most instructive AI MVP deliveries was the AI Teacher Bot for an edtech platform — an intelligent student support assistant that could answer curriculum questions, explain concepts at different difficulty levels, and flag students who were falling behind.

The Starting Point

The client came to us with a clear pain point: their human tutors were spending 60% of their time answering repetitive questions that could be answered from the course material. They wanted an AI solution that could handle these queries automatically while escalating genuinely complex questions to human tutors.

Critically, they had already digitised their entire course curriculum — 800 PDF documents, 200 hours of video transcripts, and a structured exercise bank. This data readiness was the reason a 30-day timeline was even possible.

Key Technical Decisions

Model choice: We used GPT-4o for question answering — its reasoning capability on educational content outperformed alternatives in our initial benchmarking. For the difficulty-level adaptation feature (explaining concepts in simpler or more advanced terms), we used a separate system prompt layer rather than fine-tuning, which saved three weeks of work.

Architecture: RAG pipeline built with LlamaIndex, indexing the full curriculum into Pinecone. The retrieval step fetched the three most relevant curriculum chunks for each student question, then passed them to GPT-4o as context. This kept the model grounded in actual course content rather than hallucinating explanations.

Monitoring: We instrumented every student interaction from day one. Was the AI answer accepted, or did the student immediately ask for a follow-up? Did the student escalate to a human tutor after receiving an AI answer? These two signals became our primary quality metrics.
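The retrieval step described above, fetching the three most relevant chunks and passing them to the model as context, can be illustrated without any vector database. The sketch below uses plain cosine similarity over toy two-dimensional vectors. It is not the LlamaIndex or Pinecone API, and the chunk texts, vectors, and prompt wording are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Chunk = Tuple[str, Sequence[float]]  # (chunk text, embedding vector)


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_chunks(query_vec: Sequence[float], chunks: List[Chunk], k: int = 3) -> List[str]:
    """Return the texts of the k chunks most similar to the query vector."""
    ranked = sorted(chunks, key=lambda c: cosine_similarity(query_vec, c[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(question: str, context_chunks: List[str]) -> str:
    """Assemble a grounded prompt: numbered context first, then the question."""
    context = "\n\n".join(f"[{i + 1}] {chunk}" for i, chunk in enumerate(context_chunks))
    return (
        "Answer using only the course material below. "
        "If the material does not cover the question, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    # Toy embeddings; a real pipeline would use an embedding model.
    curriculum = [
        ("Photosynthesis converts light into chemical energy.", [1.0, 0.0]),
        ("Cellular respiration releases energy from glucose.", [0.9, 0.1]),
        ("The French Revolution began in 1789.", [0.0, 1.0]),
        ("Chlorophyll absorbs red and blue light.", [0.8, 0.2]),
    ]
    chunks = top_k_chunks([1.0, 0.0], curriculum, k=3)
    print(build_prompt("How do plants make energy?", chunks))
```

Keeping k small limits token cost and keeps the model focused. The instruction to admit when the material does not cover a question is one simple way to reduce hallucinated explanations.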

The Outcome

The MVP launched on Day 28 with a cohort of 200 students. Within the first two weeks of live operation, the AI handled 74% of student questions without human escalation. Tutor time spent on repetitive queries dropped from 60% to under 20%. The client used these results to raise their next funding round.

The full case study is available in our Case Studies section on the Neura Dynamics website, including the full architecture diagram and post-launch metric progression.

What Made This Work

The reason this MVP shipped in 28 days was not the tools we used or the size of the team. It was that the client had clean, structured data ready before Week 1 began. Data readiness is the single greatest accelerator — and the single greatest bottleneck — in AI product development.

6. Five Mistakes That Kill AI MVPs Before They Launch

These are patterns we see repeatedly. Each one is avoidable.

Mistake 1: Skipping the data readiness audit. Teams start building their AI layer before anyone has assessed whether their data is actually good enough to use. You discover the problem in Week 3 when the model produces garbage outputs, and you lose two to three weeks cleaning data you should have cleaned before the sprint started.

Mistake 2: Choosing the wrong model for the use case. GPT-4o is not always the right answer. For high-volume, cost-sensitive applications, Mistral or Claude Haiku may deliver 80% of the quality at 20% of the cost. For tasks requiring deep reasoning over complex documents, GPT-4o or Claude 3.5 Sonnet is worth the premium. Benchmark three models against your actual use case in Week 1, not Week 4.

Mistake 3: No hallucination guardrails in production. The most damaging thing that can happen to an AI product is confidently wrong output that a user acts on. In healthcare, legal, or financial contexts, this can cause real harm. In any context, it destroys user trust permanently. Build output validation, source attribution, and fallback responses before going live — not as a post-launch patch.

Mistake 4: Feature creep after scope lock. 'Can we also add X?' is the sentence that kills 30-day timelines. Every feature added after Week 1 scope lock extends the timeline by more than it appears to — because it introduces integration complexity, new edge cases, and additional testing. Lock scope in Week 1 and treat all new requests as a post-MVP backlog item.

Mistake 5: No pre-defined pivot trigger. Before you launch, decide: if X metric does not reach Y by Day 30 post-launch, we will revisit our core hypothesis. Without this decision made in advance, teams spend months optimising a product whose core assumption was wrong. Common pivot triggers: activation rate below 20% after 200 sign-ups, 7-day retention below 25%, AI answer acceptance rate below 50%.
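One way to make the pivot trigger from Mistake 5 hard to ignore is to write it down as code before launch. The sketch below encodes the three example thresholds mentioned above. The metric names are hypothetical, and applying the 200 sign-up minimum to every metric (rather than only to activation rate) is a simplifying assumption.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class PivotTrigger:
    metric: str
    minimum: float  # a value below this means the trigger is breached


# Example thresholds from the text; metric names are illustrative.
TRIGGERS = [
    PivotTrigger("activation_rate", 0.20),
    PivotTrigger("retention_7d", 0.25),
    PivotTrigger("ai_acceptance_rate", 0.50),
]


def breached_triggers(
    metrics: Dict[str, float], sign_ups: int, min_sign_ups: int = 200
) -> List[str]:
    """Return the metrics that fell below their pre-agreed minimum."""
    # Too few sign-ups means the rates are noise, so report nothing yet.
    if sign_ups < min_sign_ups:
        return []
    return [
        t.metric
        for t in TRIGGERS
        if t.metric in metrics and metrics[t.metric] < t.minimum
    ]


if __name__ == "__main__":
    day_30 = {"activation_rate": 0.18, "retention_7d": 0.30, "ai_acceptance_rate": 0.55}
    print(breached_triggers(day_30, sign_ups=250))
```

A non-empty result is the agreed signal to revisit the core hypothesis rather than keep optimising.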

7. Should You Build In-House or Work with an AI Development Company?

This is the question every founder asks before committing to a build approach. Here is an honest answer.

Build in-house when:

You have three or more experienced engineers already on your payroll, including at least one with ML engineering experience

Your AI product requires deep, proprietary model development that you cannot afford to have an external party know about

You are building a long-term platform where the AI capability is your core competitive moat — meaning you will be continuously iterating on it for years

You have an extended runway (12+ months) and are not under time pressure to validate the concept quickly

Work with an AI development company when:

You need to ship in 30 to 90 days and do not have the internal team to do it

Your team has strong domain expertise but limited AI engineering experience

You want a working MVP to validate before committing to a full in-house AI team

Your MVP scope involves integrations, compliance requirements, or infrastructure decisions that require specialised experience

The economic argument for an agency at the MVP stage is straightforward: hiring a senior ML engineer in India costs INR 25 to 45 lakh per year. An entry-level AI MVP from a specialist agency at a comparable quality level can cost less than that engineer's annual salary, and it can reach the market in less time than the recruitment process takes.

Agency vs In-House

The right answer is rarely permanent. Many companies that start with an agency for their MVP later bring engineering in-house once the core product hypothesis is validated and they know exactly what skills they need. These are not mutually exclusive paths.

8. Frequently Asked Questions

Can an AI MVP really be built in 30 days?

Yes — for Tier 1 scope (single use case, clean data, no compliance requirements) with an experienced team. The 30-day timeline is achievable for projects like AI chatbots, document Q&A systems, or intelligent form assistants. Multi-agent systems, custom fine-tuned models, or products with complex data pipelines typically need 8 to 12 weeks even with an experienced team.

What is the minimum budget for an AI MVP?

Based on 2025–2026 market data, the realistic minimum for a production-ready AI MVP (not a prototype or demo) is approximately $15,000 to $20,000*. Below this figure, you are either building a very limited proof-of-concept or working with a team that lacks the AI engineering experience to build something production-ready.

*The exact cost depends on the scope of work. The above cost is indicative only.

Do I need my own data to build an AI product?

Not always — but your own data dramatically improves output quality and relevance. For a general-purpose chatbot, a pre-trained LLM like GPT-4o works well with just a strong system prompt. For domain-specific applications (medical, legal, financial, technical), your own data — whether as a RAG knowledge base or fine-tuning dataset — is what separates a generic AI tool from a genuinely useful product.

What is the difference between a PoC and an AI MVP?

A Proof of Concept (PoC) demonstrates that an idea is technically feasible. It uses mocked data, has no production infrastructure, and is not meant for real users. An MVP is a production-grade product that real users interact with, built to validate a business hypothesis — not just a technical one. A PoC typically takes 1 to 2 weeks. An MVP takes 4 to 12 weeks depending on complexity.

How do I know if my AI MVP idea is worth building?

Three questions to answer before you start: First, can you describe the specific user behaviour change you expect the AI to enable — and can you measure it? Second, do you have or can you acquire the data the AI needs within your timeline? Third, have you talked to at least 10 potential users and confirmed they would pay or significantly change their workflow for this product? If you cannot answer all three clearly, spend another week in discovery before touching code.

What happens if the AI does not perform well enough after launch?

This is normal and expected — it is the whole point of building an MVP before a full product. The important thing is to have defined what 'good enough' means before launch (your pivot trigger metric), to have telemetry running from day one so you know exactly what is failing and why, and to have budgeted for a post-launch iteration sprint. Most AI products require two to three rounds of prompt engineering and data quality improvements before they hit a quality level that drives strong retention.

Ready to Build Your AI MVP?

At Neura Dynamics, we have shipped AI MVPs across edtech, healthtech, fintech, retail, and enterprise SaaS. We run a structured 30-day sprint for Tier 1 and Tier 2 builds, with full-stack delivery covering everything from data pipeline architecture to production deployment and model monitoring.

If you are evaluating whether to build an AI product, we offer a free 30-minute scoping call where we will tell you: whether your idea is the right scope for a 30-day build, what your data readiness gaps are, what a realistic budget looks like for your specific use case, and which tech stack we would recommend for your requirements.

No sales pitch. No proposal before we have listened. Just a direct conversation with an AI engineer who has built what you are trying to build.

Book your free scoping call with experts.

Author

Himanshu is the Founder of Neura Dynamics and a seasoned Full Stack Developer with 15+ years of experience in application development, cloud infrastructure, automation, and scalable digital solutions. With expertise across Python, Django, AWS, Azure, and AI-powered systems, he shares practical insights on modern technology, software architecture, and digital transformation.